Along with other reasons, it's good to have batch_size higher than some minimum. When the batch_size is larger, such effects would be reduced. This sample when combined with 2-3 even properly labeled samples, can result in an update which does not decrease the global loss, but increase it, or throw it away from a local minima. Let's say within your data points, you have a mislabeled sample. So it's like you are trusting every small portion of the data points. (The wandering is also due to the second reason below). Thus, you might end up just wandering around rather than locking down on a good local minima. To put this into perspective, you want to learn 200K parameters or find a good local minimum in a 200K-D space using only 100 samples. Large network, small dataset: It seems you are training a relatively large network with 200K+ parameters with a very small number of samples, ~100. Looking at your code, I see two possible sources. But in your case, it is more that normal I would say. Note that there are other reasons for the loss having some stochastic behavior. If you use all the samples for each update, you should see it decreasing and finally reaching a limit. This is why batch_size parameter exists which determines how many samples you want to use to make one update to the model parameters. The main one though is the fact that almost all neural nets are trained with different forms of stochastic gradient descent. There are several reasons that can cause fluctuations in training loss over epochs. Loss and accuracy during the training for these examples: 4: To see if the problem is not just a bug in the code: I have made an artificial example (2 classes that are not difficult to classify: cos vs arccos). If unspecified, it will default to 32.įor batch_size=2 the LSTM did not seem to learn properly (loss fluctuates around the same value and does not decrease). 
The rest of the parameters (learning rate, batch size) are the same as the defaults in Keras: (lr=0.001, rho=0.9, epsilon=None, decay=0.0)īatch_size: Integer or None. Shape of the corresponding labels (as a one-hot vector for 6 categories): (98, 6)

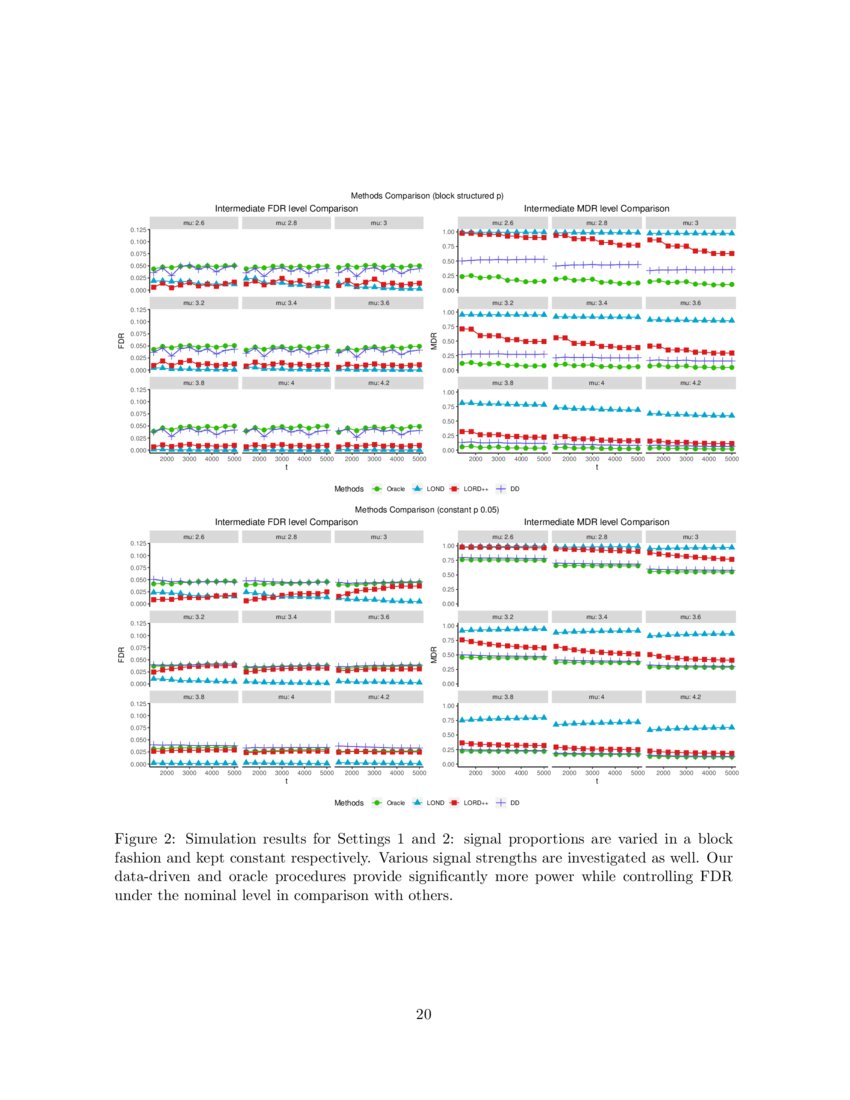

Shape of the training set (#sequences, #timesteps in a sequence, #features): (98, 100, 1) check that each of 6 classes has approximately the same number of examples in the training set.cut the sequences in the smaller sequences (the same length for all of the smaller sequences: 100 timesteps each).changed the sampling frequency so the sequences are not too long (LSTM does not seem to learn otherwise).Target variables: the surface on which robot is operating (as a one-hot vector, 6 different categories). History = model.fit(train_x, train_y, epochs = 1500)ĭata: sequences of values of the current (from the sensors of a robot). Upd.: loss for 1000+ epochs (no BatchNormalization layer, Keras' unmodifier RmsProp):įor the final graph: pile(loss='categorical_crossentropy', optimizer='rmsprop', metrics=) (Please, note that I have checked similar questions here but it did not help me to resolve my issue.) If not, why would this happen for the simple LSTM model with the lr parameter set to some really small value? Is it normal for the loss to fluctuate like that during the training? And why it would happen? I have always thought that the loss is just suppose to gradually go down but here it does not seem to behave like that. sgd = optimizers.SGD(lr=0.001, momentum=0.0, decay=0.0, nesterov=False)īut I still got the same problem: loss was fluctuating instead of just decreasing. I have tried different values for lr but still got the same result. I also used SGD without momentum and decay. I thought that these fluctuations occur because of Dropout layers / changes in the learning rate (I used rmsprop/adam), so I made a simpler model: (Note that the accuracy actually does reach 100% eventually, but it takes around 800 epochs.) During the training, the loss fluctuates a lot, and I do not understand why that would happen.Īnd here are the loss&accuracy during the training:
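To make the mislabeled-sample argument concrete, here is a minimal sketch in plain Python. It is a deliberate simplification and not the asker's LSTM: the "model" is a single constant prediction `w` trained with squared loss, the ten labels in `ys` are hypothetical (nine correct labels of `1.0` and one flipped label of `-1.0`), and `lr = 0.5` is an arbitrary choice. It compares one full-batch gradient step with mini-batch steps of size 2, 4 and 8 that each contain the flipped sample, measuring the loss over the whole dataset after each step.

```python
# Toy illustration (not the original LSTM): a one-parameter model that predicts
# a constant w, trained with squared loss on hypothetical labels.
ys = [1.0] * 9 + [-1.0]  # nine correct labels and one flipped ("mislabeled") one
lr = 0.5                 # arbitrary learning rate for the illustration


def global_loss(w, data=ys):
    """Mean squared loss over the whole dataset: 1/(2N) * sum((w - y)^2)."""
    return sum((w - y) ** 2 for y in data) / (2 * len(data))


def sgd_step(w, batch):
    """One gradient step that uses only the samples in `batch`."""
    grad = w - sum(batch) / len(batch)  # derivative of the batch's mean squared loss
    return w - lr * grad


w0 = 0.7  # starting point; the global optimum is mean(ys) = 0.8
start = global_loss(w0)

batches = {
    "full batch (10)": ys,
    "flipped + 1 correct (2)": [1.0, -1.0],
    "flipped + 3 correct (4)": [1.0, 1.0, 1.0, -1.0],
    "flipped + 7 correct (8)": [1.0] * 7 + [-1.0],
}

results = {}
for name, batch in batches.items():
    after = global_loss(sgd_step(w0, batch))
    results[name] = after
    trend = "decreases" if after < start else "increases"
    print(f"{name:>24}: global loss {start:.4f} -> {after:.4f} ({trend})")
```

With these numbers, the full-batch step and the batch of 8 lower the global loss, while the batches of 2 and 4 raise it: the flipped label contributes 1/B of the batch gradient, so in a tiny batch it can dominate the direction of the update.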
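The claim that larger batches damp these effects can be quantified. For a dataset of N per-sample gradients with population variance σ², the mean gradient of a batch of B samples drawn without replacement has variance (σ²/B)·(N−B)/(N−1), which shrinks as B grows and is exactly zero for B = N (full-batch training). The sketch below, in plain Python, checks that formula by brute force on a small list of hypothetical scalar per-sample gradients (treating gradients as scalars is a simplification) and counts how many parameter updates one epoch takes for the 98 training sequences from the question.

```python
import itertools
import math
import statistics


def batch_mean_variance(grads, batch_size):
    """Variance of the mean of `batch_size` gradients drawn without replacement
    (finite-population formula; sigma^2 is the population variance of `grads`)."""
    n = len(grads)
    if n == 1:
        return 0.0
    sigma2 = statistics.pvariance(grads)
    return sigma2 / batch_size * (n - batch_size) / (n - 1)


def brute_force_variance(grads, batch_size):
    """Same quantity, computed by enumerating every possible batch."""
    means = [sum(b) / batch_size for b in itertools.combinations(grads, batch_size)]
    return statistics.pvariance(means)


# Hypothetical per-sample gradients; the last one plays the mislabeled sample.
grads = [0.9, 1.1, 1.0, 0.8, 1.2, -3.0]

for b in range(1, len(grads) + 1):
    exact = batch_mean_variance(grads, b)
    assert math.isclose(exact, brute_force_variance(grads, b), abs_tol=1e-12)
    print(f"batch_size={b}: variance of the batch gradient = {exact:.4f}")

# Updates per epoch for the 98 training sequences mentioned in the question.
n_train = 98
for b in (2, 4, 32, 98):
    print(f"batch_size={b}: {math.ceil(n_train / b)} updates per epoch")
```

Smaller batches give more updates per epoch, but each one follows a noisier estimate of the full gradient, which is one reason batch_size is usually tuned like any other hyperparameter.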
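The "200K+ parameters for ~100 samples" comparison can be reproduced with a little arithmetic. The question does not show the layer sizes, so the configuration below (two stacked LSTM layers with 128 units on 1 input feature, followed by a 6-way Dense softmax) is purely hypothetical. The counting rule is the standard one for an LSTM layer with biases, 4 × (units × (input_dim + units) + units), since each of the four gates has an input kernel, a recurrent kernel and a bias vector.

```python
def lstm_params(input_dim, units):
    """Trainable parameters of a standard LSTM layer with biases:
    four gates, each with input weights, recurrent weights and a bias."""
    return 4 * (units * (input_dim + units) + units)


def dense_params(input_dim, units):
    """Weights plus biases of a fully connected layer."""
    return input_dim * units + units


# Hypothetical architecture, chosen only to land near the "200K+" figure.
layers = [
    ("LSTM(128), 1 input feature", lstm_params(1, 128)),
    ("LSTM(128), stacked", lstm_params(128, 128)),
    ("Dense(6) softmax", dense_params(128, 6)),
]

total = sum(count for _, count in layers)
n_samples = 98  # training sequences reported in the question

for name, count in layers:
    print(f"{name:<28} {count:>7,}")
print(f"{'total':<28} {total:>7,}")
print(f"parameters per training sequence: {total / n_samples:.0f}")
```

Under these assumed sizes the network has 198,918 parameters, roughly 2,000 per training sequence, which is the imbalance the answer points at.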
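The preprocessing listed in the question (reduce the sampling rate, cut each recording into 100-timestep pieces, one-hot encode the 6 surface classes, check class balance) can be sketched in plain Python as follows. The function names, the decimation factor and the toy recordings are hypothetical. Plain decimation (keeping every k-th value) is used for simplicity and applies no anti-aliasing filter, which a real resampling step would normally include.

```python
from collections import Counter


def downsample(signal, factor):
    """Keep every `factor`-th value (naive decimation, no low-pass filter)."""
    return signal[::factor]


def cut_into_windows(signal, length=100):
    """Split a sequence into non-overlapping windows of `length` timesteps,
    dropping the incomplete tail."""
    return [signal[i:i + length] for i in range(0, len(signal) - length + 1, length)]


def one_hot(label, num_classes=6):
    """Encode a surface class index as a one-hot vector."""
    return [1.0 if i == label else 0.0 for i in range(num_classes)]


def build_dataset(recordings, factor, length=100):
    """recordings: list of (signal, surface_label) pairs.
    Returns windows shaped (timesteps, features=1) and one label per window."""
    xs, labels = [], []
    for signal, label in recordings:
        for window in cut_into_windows(downsample(signal, factor), length):
            xs.append([[value] for value in window])  # add the feature axis
            labels.append(label)
    return xs, labels


# Toy recordings: (list of current readings, surface class 0..5).
recordings = [([0.1 * i for i in range(1000)], 0), ([0.2] * 850, 1)]
xs, labels = build_dataset(recordings, factor=4)
ys = [one_hot(label) for label in labels]

print(len(xs), len(xs[0]), len(xs[0][0]))  # (#sequences, #timesteps, #features)
print(len(ys), len(ys[0]))                 # (#sequences, #classes)
print(Counter(labels))                     # compare counts to spot class imbalance
```

Dropping the incomplete tail keeps every window the same length, so the windows can be stacked into a single array of shape (#sequences, 100, 1) as in the question.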
